We pick up a lot of clients who have had bad experiences with other IT resellers. As a result, we get to see some pretty strange configurations that adversely affect the client's environment. Here are a few I've seen with ShadowProtect that really should NOT be done.

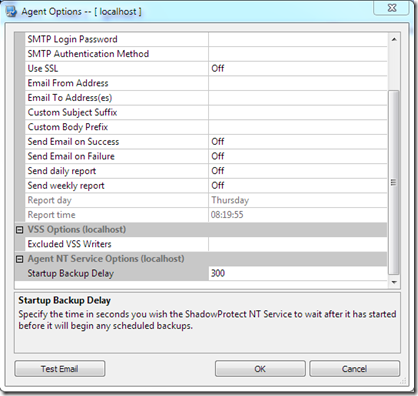

Running missed jobs automatically – ShadowProtect has an option to run a missed job automatically when the ShadowProtect services start, and another to auto-execute missed tasks. In principle this sounds like a good idea, but in practice it can be a problem. Take the example of a server that had "other issues" not related to ShadowProtect: it would crash with a BSOD that had nothing to do with StorageCraft (I'll do another blog post on that later). Before the BSOD, the server would degrade to the point where many things stopped working correctly. When it rebooted, on top of everything the server normally does at startup, it would also trigger the backup jobs it had missed, which increased the load even further. The end result was that the server was brought to its knees for quite some time after it came up, as the disks got hammered like crazy.

There are two things you need to do to rectify this. First, under Options > Agent Options, find the "Start Backup Delay" option. Its default is 60 seconds, which means ShadowProtect waits 60 seconds after its services start before it starts any backups. I recommend changing it to 300 seconds (5 minutes). This gives the server a chance to start up and settle before any backup jobs are allowed to run.

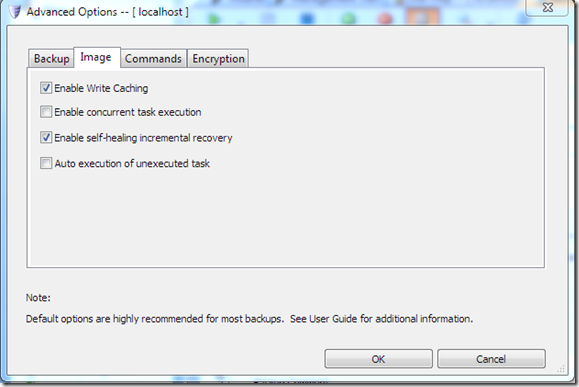

The second option, which I HIGHLY recommend you NEVER use, is "Auto execution of unexecuted task". It is set in the Advanced Options of each individual backup job. If you enable it, ShadowProtect will automatically run any missed backups when the system restarts. Take the server above that kept hitting a BSOD: it would start up and, within 60 seconds (the default Start Backup Delay), run the missed backup jobs. This placed EVEN MORE load on the server and caused more problems still. Note that this feature runs missed backups regardless of why they were missed, so if your server was shut down for maintenance, a power outage, etc., it will also run the missed backup jobs automatically as soon as it comes back up.

The third option I suggest you NEVER use is "Enable concurrent task execution", which allows multiple jobs to run AT THE SAME TIME. This might be fine if you have a very high-performance disk array, but it's not something you want at all on the majority of servers, as it can cause the server to freeze at times. The likely end result is that the client powers the server off, which is even worse.

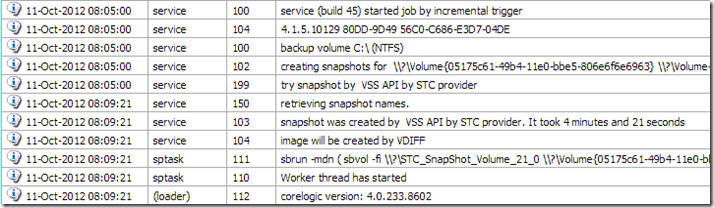

Bad Disk Configuration – this is something we see often. One example is a server with an HP B110i SATA RAID controller with NO additional caching. The server had 2 x 2TB drives in a RAID 1 configuration with no caching at all on the controller. It was also configured with 3 partitions on the disks, which on its own is normally not an issue either. What compounded the problem was that a single backup job had been created to back up all three partitions at once. This is a big mistake on a machine configured like this. You can see in the log below that the backup starts at 8:05 in this instance, and it takes 4 minutes and 21 seconds to do the VSS snapshot. During this time the server effectively pauses many of its operations so that it can snapshot its current state. Why does it take so long? Because it is trying to snapshot 3 partitions' worth of data back to the same disks, so the disk IO is massively overloaded.

Solutions here could include:

- Add a hardware RAID cache to the controller

- Create three individual backup jobs configured to run at different, non-conflicting times

- Both of the above
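To illustrate what "non-conflicting times" means in practice, here is a minimal Python sketch that staggers one job per partition so each starts only after the previous one's estimated finish plus a safety gap. The partition names, durations and gap are hypothetical, and this code does not interact with ShadowProtect at all; it just works out start times you could then set on each job.

```python
from datetime import datetime, timedelta


def stagger_jobs(jobs, first_start, gap):
    """Return (name, start, end) tuples so that no two jobs overlap.

    jobs: list of (name, estimated_duration) pairs, durations as timedelta.
    first_start: datetime at which the first job may begin.
    gap: idle time left between one job's estimated end and the next start.
    """
    schedule = []
    start = first_start
    for name, duration in jobs:
        end = start + duration
        schedule.append((name, start, end))
        # The next job only starts after this one's estimated end plus the gap.
        start = end + gap
    return schedule


if __name__ == "__main__":
    # Hypothetical partitions and estimated backup durations.
    jobs = [
        ("C:", timedelta(minutes=20)),
        ("D:", timedelta(minutes=45)),
        ("E:", timedelta(minutes=30)),
    ]
    first = datetime(2024, 1, 1, 20, 0)
    for name, start, end in stagger_jobs(jobs, first, timedelta(minutes=15)):
        print(f"{name} {start:%H:%M} - {end:%H:%M}")
```

Run as-is, this prints C: 20:00 - 20:20, D: 20:35 - 21:20 and E: 21:35 - 22:05. The gap is there because estimates are only estimates; if a job regularly runs long, widen the gap rather than letting jobs overlap.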

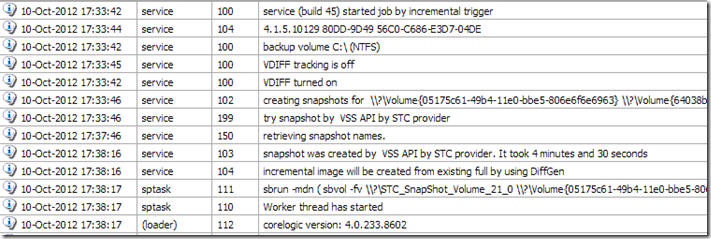

BSOD or Hard Powering off the server – you need to avoid this at all costs. StorageCraft has some cool methods to track the changes made to the disks while the server is running (I won't go into them here), but these methods rely on the server being shut down correctly rather than crashing. If the server is shut down correctly, ShadowProtect can create the image using VDIFF, which is very fast and the best way to minimise backup time. You can see above that the backup shown is being created by VDIFF from the line "image will be created by VDIFF"; this tells you that StorageCraft is using the fastest method it has to create the image. If, however, the server hits a BSOD or you power it off with the power button, the information StorageCraft needs to create a VDIFF backup is lost, and it falls back to DiffGen instead. You can see this in the log file below: "Incremental image will be created from existing full by using DiffGen".

When an image is created by DiffGen, ShadowProtect compares the existing backup image chain with what is on the disk right now to determine what has changed, and then creates an incremental backup. This process is EXTREMELY slow because it has to read and compare every sector across the entire image chain. If you happen to watch the time remaining or speed counters in ShadowProtect while it is doing a DiffGen, you will see them fluctuate wildly as it works over and over the image chain. Once this backup has completed, however, it will go back to doing VDIFF backups.
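To show why a DiffGen-style pass is so much slower than a tracked incremental, here is a simplified Python model. It is NOT how StorageCraft implements VDIFF or DiffGen; it assumes the image chain has already been merged into a single image, uses an assumed 4 KB block size, and uses a hypothetical in-memory `ChangeTracker` class to stand in for ShadowProtect's change tracking. The full-scan function has to read and hash every block on the disk, while the tracker just hands back the blocks it saw being written.

```python
import hashlib

# Assumed block size for this model; not a ShadowProtect setting.
BLOCK_SIZE = 4096


def block_hashes(data, block_size=BLOCK_SIZE):
    """Hash every block of a byte buffer (this is the expensive full read)."""
    return [
        hashlib.sha256(data[i:i + block_size]).digest()
        for i in range(0, len(data), block_size)
    ]


def diffgen_changed_blocks(current_disk, last_image, block_size=BLOCK_SIZE):
    """DiffGen-style: read the whole disk and compare it with the last image.

    last_image stands in for the image chain merged into one image, which
    simplifies comparing across every image in the chain.
    """
    current = block_hashes(current_disk, block_size)
    previous = block_hashes(last_image, block_size)
    return [
        index
        for index, digest in enumerate(current)
        if index >= len(previous) or digest != previous[index]
    ]


class ChangeTracker:
    """Hypothetical stand-in for change tracking between backups.

    It lives only in memory, so a BSOD or hard power-off loses it and the
    next backup has to fall back to diffgen_changed_blocks().
    """

    def __init__(self, block_size=BLOCK_SIZE):
        self.block_size = block_size
        self.dirty = set()

    def record_write(self, offset, length):
        """Mark every block touched by a write of `length` bytes at `offset`."""
        if length <= 0:
            return
        first = offset // self.block_size
        last = (offset + length - 1) // self.block_size
        self.dirty.update(range(first, last + 1))

    def changed_blocks(self):
        """Return changed block indices without reading the disk at all."""
        return sorted(self.dirty)


if __name__ == "__main__":
    disk = bytearray(BLOCK_SIZE * 4)  # a tiny four-block "disk"
    image = bytes(disk)               # the last backup image of that disk
    tracker = ChangeTracker()

    disk[5000:5010] = b"x" * 10       # a write that lands in block 1
    tracker.record_write(5000, 10)

    print(diffgen_changed_blocks(bytes(disk), image))  # prints [1], after hashing all 4 blocks
    print(tracker.changed_blocks())                    # prints [1], with no disk read
```

Both approaches find the same changed block, but the full scan's cost grows with the size of the whole disk (and, in the real product, the whole chain), while the tracker's cost grows only with the number of writes. That is the gap you feel on a server that has just come back from a hard power-off.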

Now imagine, if you will, a client's server with pretty much ALL of the above issues at once… yeah… you get the idea. It's pretty easy to configure a great product like ShadowProtect VERY badly and create a massively bad impression on the client. From what I'm told, the previous IT provider blamed the product for all of these failings. Funny, though: once we resolved them as best we could, the server performed much better (still not great, due to the disk configuration) and was much more stable too!
